
Math 753/853 exercises 3: floating point numbers

1. Based on the lecture material from 9/7/2016, what range of integers should a 16-bit integer type represent? Check your answer by running typemin(Int16) and typemax(Int16) in Julia.

2. Same as problem 1 for 32-bit integers.
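Hint for problems 1 and 2: the check can be sketched on a smaller type first. The snippet below uses Int8, which is not one of the types asked about, and assumes the signed two's-complement layout, so an N-bit integer spans -2^(N-1) to 2^(N-1) - 1. The names N, lo, and hi are illustrative only.

```julia
# Illustration with Int8 (8 bits), not the types asked about in problems 1 and 2.
# Assumption: signed two's-complement storage, so the range is -2^(N-1) to 2^(N-1) - 1.
N = 8
lo = -2^(N - 1)      # evaluated in the default Int type, so no overflow at this size
hi = 2^(N - 1) - 1

println("predicted range:  ", lo, " to ", hi)
println("Julia reports:    ", typemin(Int8), " to ", typemax(Int8))
println("prediction holds: ", lo == typemin(Int8) && hi == typemax(Int8))
```

Changing N and the type name together repeats the same check for other widths.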

3. The standard 32-bit floating-point type uses 1 bit for the sign, 8 bits for the exponent, and 23 bits for the mantissa.

- What is machine epsilon for a 32-bit float?

- How many digits of accuracy does the mantissa have?

- What are the minimum and maximum exponents, in base 10?
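Hint for problem 3: the same three questions can be worked through for Float64 as a sketch of the reasoning. This assumes the standard 64-bit layout (1 sign bit, 11 exponent bits, 52 stored mantissa bits), takes machine epsilon to be the gap between 1 and the next larger floating-point number, and uses floatmin and floatmax, which assumes Julia 1.0 or later. The variable names are illustrative only.

```julia
# Illustration with Float64, not the 32-bit type asked about in problem 3.
# Assumption: 52 stored mantissa bits, and machine epsilon defined as the
# gap between 1.0 and the next larger representable number.
p = 52
eps_predicted = 2.0^(-p)
println("predicted eps matches eps(Float64): ", eps_predicted == eps(Float64))

# Each mantissa bit carries log10(2) decimal digits, so p bits give roughly this many.
decimal_digits = p * log10(2)
println("approximate decimal digits: ", decimal_digits)

# Base-10 exponents of the smallest and largest normalized Float64 values.
# Subnormal numbers reach below floatmin and are not counted here.
println("min base-10 exponent: ", log10(floatmin(Float64)))
println("max base-10 exponent: ", log10(floatmax(Float64)))
```

Whether to count the implicit leading 1 as an extra bit of precision changes the digit estimate slightly, so treat the figure as approximate.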

4. The standard 16-bit floating-point type uses 1 bit for the sign, 5 bits for the exponent, and 10 bits for the mantissa. How large an error do you expect in a 16-bit computation of 9.4 - 9 - 0.4? Figure out how to do this 16-bit calculation in Julia and verify your expectation.
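Hint for problem 4: one possible way to control the precision of a calculation is to convert every operand to the target type before doing any arithmetic. The sketch below does this for Float64 only. The helper name residual is hypothetical, and each literal such as 9.4 is read as a Float64 and then converted to T, which is an assumption about how you want the inputs rounded.

```julia
# residual(T) evaluates 9.4 - 9 - 0.4 with every operand converted to type T first,
# so all of the arithmetic is carried out in T.
# Hypothetical helper, shown here for Float64 rather than the 16-bit type in problem 4.
residual(T) = T(9.4) - T(9) - T(0.4)

r64 = residual(Float64)
println("result type:        ", typeof(r64))
println("9.4 - 9 - 0.4:      ", r64)
println("in units of eps:    ", r64 / eps(Float64))
```

In exact arithmetic the result would be zero, so whatever is printed is pure rounding error. Comparing it with machine epsilon for the type shows whether the error has the size you predicted.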

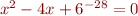

5. Find the roots of  to four significant digits.

to four significant digits.

Last modified: 2016/09/20 07:37 by gibson